Source: Mike via Adobe Stock

Deepfake creation software is proliferating on the Dark Web, enabling scammers to carry out artificial intelligence (AI)-assisted financial fraud with previously unheard-of creativity and scope.

Consider what happened a few weeks back, when a Hong Kong-based employee in the finance department of a multinational corporation received a message. It was his company's UK-based CFO, asking him to carry out a transaction. As reported by The South China Morning Post, the employee had an initial "moment of doubt" about the request, until something changed his mind.

Not long after the initial message, the employee got on a video conference call with that CFO, alongside a roster of other colleagues. They all looked and sounded like the people he knew. He was asked to give a short introduction to the group, then he was given instructions, and the meeting ended abruptly thereafter.

In the week that followed, the employee continued to discuss the transactions with his "colleagues" over instant messaging, email, and one-on-one calls. By the time the deepfake ruse was revealed, he'd already made 15 transactions totaling $25.5 million (about 200 million Hong Kong dollars).

The Flourishing Market for Deepfake Software

Deepfakes — good ones, too — have been around for some time now. "This is something that people have been able to do for a number of years. I think it was December 2020 when I first said, having spent a decade looking at fakes, that I could no longer tell the difference," says Dominic Forrest, CTO of iProov.

What's changed is that they have become available to a much wider audience, with a far lower barrier to entry.

Back in the 2010s, he explains, "it was a highly skilled job, which would require a lot of processing, and it was very difficult to do. Today, there are many simple tools on the market that people can download either free of charge or for a few tens of dollars, that create quality deepfakes of people just from a single reference image from a LinkedIn profile, or a Facebook page or Twitter, wherever it may be."

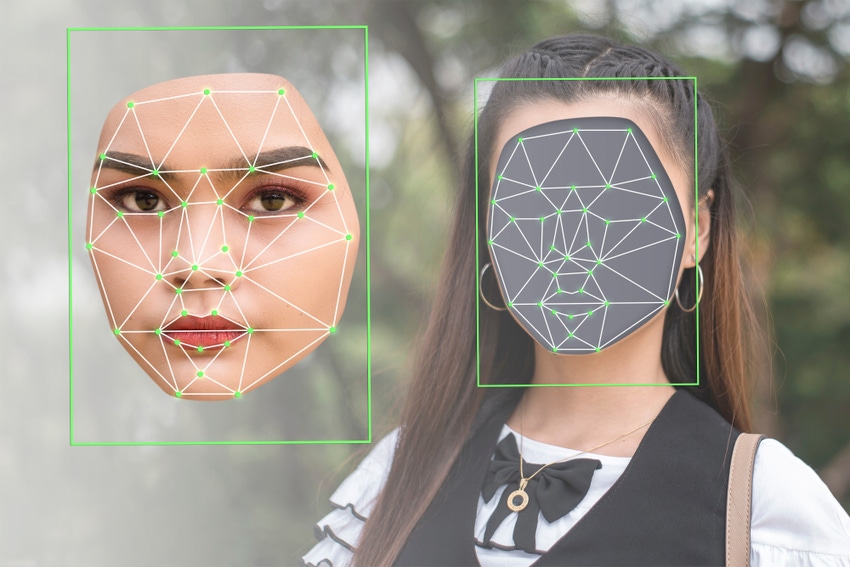

Face swapping, for example, has become utterly commonplace. For a report set to release on Wednesday, iProov has tracked more than 100 separate tools on the market today designed for creating simple face swaps.

More advanced offerings are out there, too, like OnlyFake, a Dark Web service that can produce a realistic fake ID in an instant, or many of them at scale, for just $15 each. A recent post to its Telegram account heralds the sea change, stating that "The era of rendering documents using Photoshop is coming to an end."

These same advancements in quality and accessibility have allowed above-board deepfake products to flourish as well. The Hollywood strikes in 2023 were driven in part by concerns that applying this technology to movies and TV might make extras obsolete. And the Chinese multimedia giant Tencent now offers a commercial deepfake service capable of creating high-definition, realistic human fakes using just three minutes of live-action video and 100 spoken sentences as source material.

The Easy Solutions to Deepfake Detection, & the Hard Ones

Much of the discourse around deepfake security focuses on identifying idiosyncrasies in the end product: the imperfections in a fake image, the lack of resonance that might give away an AI-generated voice, and other technical shortcomings that a human or anti-deepfake software might be able to flag as suspicious.

Because the technology is improving so fast, though, this is becoming more and more difficult to do by the day.

"A lot of people suggest things like: get people to take their glasses off and back on, and you'll be able to spot if it's a deepfake. That is just no longer true. As a human being, you cannot tell the difference," Forrest says.

Trying to beat the software may be one worthwhile approach, says Kevin Vreeland, general manager of North America at Veridas. But he offers a simpler, more reliable alternative that deals with deepfakes at a more fundamental level: Rather than constantly asking whether everything is real, companies can focus on preventing synthetics from reaching employees in the first place.

"Maybe they're calling from an unknown phone number," he says of deepfake attackers, "or calling from an unknown location. You can even require people to turn on geolocation on their devices in order to make these big money transfers. Once you start to break all that down, you're going to see that the data that comes with it isn't legitimate, and that's where you have to start questioning and flagging."
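To make the idea concrete, here is a minimal sketch of the kind of metadata check Vreeland describes, written in Python. It is an illustration only, not a Veridas product or any vendor's actual method: the `TransferRequest` fields, the `metadata_flags` function, the flag names, and the `LARGE_TRANSFER_USD` threshold are all assumptions invented for this example.

```python
from dataclasses import dataclass
from typing import List, Optional, Set

# Hypothetical threshold: transfers at or above this amount require geolocation.
LARGE_TRANSFER_USD = 10_000


@dataclass
class TransferRequest:
    """Metadata that accompanies a request to move money (illustrative fields)."""
    amount_usd: float
    caller_number: str
    caller_country: Optional[str]  # None when the device shared no geolocation


def metadata_flags(
    request: TransferRequest,
    known_numbers: Set[str],
    expected_countries: Set[str],
) -> List[str]:
    """Return the reasons this request deserves a second look (empty if none)."""
    flags = []

    # A caller the company has never seen before is the first warning sign.
    if request.caller_number not in known_numbers:
        flags.append("unknown_number")

    if request.caller_country is None:
        # Big transfers must come with geolocation turned on.
        if request.amount_usd >= LARGE_TRANSFER_USD:
            flags.append("geolocation_missing")
    elif request.caller_country not in expected_countries:
        # Location was shared, but it is not where this person should be.
        flags.append("unexpected_location")

    return flags
```

Under these assumptions, a multimillion-dollar request from an unrecognized number with geolocation switched off would come back with two flags, which is the cue to stop and verify through a separate channel no matter how convincing the face on the call looks.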

Until detection tech catches up, it's this more basic metadata that makes for easier pickings. Because while their deepfakes are remarkably true-to-life, Vreeland points out, "these deepfake attackers can't cover all of their bases."
